Course Description

Advances in

real-time graphics research and the increasing power of mainstream GPUs have generated

an explosion of innovative algorithms suitable for rendering complex virtual

worlds at interactive rates. This course will focus on the interchange of ideas

from game development and graphics research, demonstrating converging

algorithms enabling unprecedented visual quality in real time. It will also

focus on recent innovations in real-time rendering algorithms used in shipping

commercial games and high-end graphics demos. Many of these techniques are

derived from academic work which has been presented at SIGGRAPH in the past and

we seek to give back to the SIGGRAPH community by sharing what we have learned

while deploying advanced real-time rendering techniques into the mainstream

marketplace.

This course was introduced to the SIGGRAPH community last year and it was

extremely well received. Our lecturers presented several innovative

rendering techniques, and many of those techniques can be seen

in state-of-the-art games shipping this year, with

previews of those games in this year’s Electronic Theater. This year we

will bring an entirely new set of techniques to the table, and even more of

them are coming directly from the game development community, along with

presenters from industry and academia.

The second-year version of this course will include state-of-the-art

real-time rendering research as well as algorithms implemented in several award-winning

games and will focus on general, optimized methods applicable in a variety of

applications including scientific visualization, offline and cinematic

rendering, and game rendering. Some of the topics covered will include

rendering face wrinkles in real time; surface detail maps with soft

self-shadowing and fast vector texture map rendering in Valve’s Source™ engine; and interactive illustrative

rendering in Valve’s Team Fortress 2. This course will cover terrain rendering

and shader network design in the latest Frostbite rendering engine from DICE,

and the architectural design and framework for direct and indirect illumination

from the upcoming CryEngine 2.0 by Crytek. We will also introduce the idea of

using the GPU for direct computation of non-rigid body deformations at interactive

rates, along with advanced particle dynamics using the DirectX 10 API.

We will provide an updated version of these course notes with more

material on real-time tessellation and noise computation on the GPU,

downloadable from the ACM Digital Library and from the AMD ATI developer

website prior to SIGGRAPH.


Syllabus

Welcome and Introduction to Advances in Real-Time Rendering

in 3D Graphics and Games

Natalya Tatarchuk (AMD)

Real-Time Particle Systems on the GPU in Dynamic

Environments

Shanon Drone (Microsoft)

Dynamic Deformation Textures

Nico Galoppo (UNC)

Surface Detail Maps with Soft Self-Shadowing

Chris Green (Valve)

Simple, Fast Vector Texture Maps on GPU

Chris Green (Valve)

Real-Time Tessellation on GPU

Natalya Tatarchuk (AMD)

Tessellation in Viva Piñata

Michael Boulton (Rare)

Illustrative Rendering in Team Fortress 2

Jason L. Mitchell (Valve)

Frostbite Rendering Architecture and Case Study: Terrain

Rendering

Johan Andersson (DICE)

Real-Time Wrinkles

Chris Oat (AMD)

Finding Next Gen - CryEngine2

Martin Mittring (Crytek)

Closing Remarks

Natalya Tatarchuk (AMD)

Prerequisites

This course is intended for graphics researchers,

game developers and technical directors. Thorough knowledge of 3D image

synthesis, computer graphics illumination models, the DirectX and OpenGL APIs,

high-level shading languages, and C/C++ programming is assumed.

Intended Audience

Technical

practitioners and developers of graphics engines for visualization, games, or

effects rendering who are interested in interactive rendering.

Course Organizer

Natalya Tatarchuk is a staff research engineer leading the

research team in AMD's 3D Application Research Group, where she pushes GPU

boundaries, investigating innovative graphics techniques and creating striking

interactive renderings. In the past she led the

creation of the state-of-the-art realistic rendering of city environments in

the ATI demo “ToyShop” and has been the lead for the

tools group at ATI Research. Natalya has been in the graphics industry for

years, having previously worked on haptic 3D modeling software and scientific

visualization libraries, among others. She has published multiple papers in

various computer graphics conferences and articles in technical book series

such as ShaderX and Game Programming Gems and has

presented talks at SIGGRAPH and at Game Developers Conferences worldwide,

amongst others. Natalya holds BAs in Computer Science and Mathematics from

Boston University.

Talks

Real-Time Particle Systems on the GPU in

Dynamic Environments

Abstract:

This

presentation details methods for simulating advanced particle systems entirely

on the GPU, enabling complex interactions between particles and their

environments in real time. The approach leverages non-parametric particle

systems, which integrate acceleration and velocity over time to allow for

dynamic, responsive behavior beyond the constraints of analytical motion paths.

Key techniques include GPU-based storage and double buffering of particle

states, N-body interactions using force splatting, flocking behaviors with

collision avoidance and alignment via mip-map

averaging, and environmental reactivity through both spherical and

arbitrary-object collision handling using volume textures. The talk also

explores bidirectional interactions, where particles influence scene

appearance, such as painting effects, while maintaining high performance. By

exploiting modern GPU features such as geometry shaders, texture arrays, and

instancing, this work demonstrates how particle systems can achieve high

fidelity, scalability, and responsiveness for games, simulations, and

interactive applications.
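
As a rough illustration of the non-parametric update and double buffering described above, the following CPU-side sketch integrates acceleration into velocity and velocity into position, reading from one buffer and writing to the other, then swapping them. It is a minimal sketch under stated assumptions, not the presenter's implementation: the `Particle` type, the `step` and `simulate` functions, the single collision sphere, and all default values are hypothetical, and on a GPU each loop iteration would correspond to one shader invocation.

```python
from dataclasses import dataclass


@dataclass
class Particle:
    pos: tuple  # (x, y, z)
    vel: tuple  # (vx, vy, vz)


def step(read_buf, write_buf, accel, dt, sphere_center, sphere_radius):
    """Read each particle from read_buf and write its new state to write_buf."""
    for i, p in enumerate(read_buf):
        # Integrate acceleration into velocity, then velocity into position.
        vel = tuple(v + a * dt for v, a in zip(p.vel, accel))
        pos = tuple(x + v * dt for x, v in zip(p.pos, vel))

        # Sphere collision (hypothetical single collider): if the particle
        # ended up inside, push it to the surface and reflect its velocity.
        d = tuple(x - c for x, c in zip(pos, sphere_center))
        dist = sum(c * c for c in d) ** 0.5
        if 0.0 < dist < sphere_radius:
            n = tuple(c / dist for c in d)
            pos = tuple(c + sphere_radius * k for c, k in zip(sphere_center, n))
            vn = sum(v * k for v, k in zip(vel, n))
            if vn < 0.0:
                vel = tuple(v - 2.0 * vn * k for v, k in zip(vel, n))

        write_buf[i] = Particle(pos, vel)


def simulate(particles, steps, accel=(0.0, -9.8, 0.0), dt=0.01,
             sphere_center=(0.0, 0.0, 0.0), sphere_radius=1.0):
    """Run `steps` updates, ping-ponging between two state buffers."""
    read_buf = list(particles)
    write_buf = [None] * len(read_buf)
    for _ in range(steps):
        step(read_buf, write_buf, accel, dt, sphere_center, sphere_radius)
        read_buf, write_buf = write_buf, read_buf  # swap the two buffers
    return read_buf
```

Because every particle reads only the previous frame's buffer, the per-particle updates are independent of each other, which is the property that makes the scheme map well to parallel hardware.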

Speaker Bio:

Shanon Drone is a software

developer at Microsoft. Shanon joined Microsoft in

2001 and has recently been working on Direct3D 10 samples and applications. He

spends a great deal of his time researching and implementing novel

graphics techniques.

Materials: PowerPoint

Slides (5.6 MB), PDF

Slides (3.3 MB), Course

Notes (1.9 MB)

https://doi.org/10.1145/1281500.1281670

Dynamic

Deformation Textures

Abstract:

This

presentation introduces a GPU-accelerated framework for simulating deformable

models in real time through the use of dynamic deformation textures. The method

focuses on efficiently modeling complex surface deformations resulting from

contact, such as dents, footprints, and other localized changes, without

requiring high-resolution mesh modifications. By encoding deformation data into

textures and leveraging parallel GPU processing, the approach achieves high

visual detail with minimal computational overhead, making it well suited for

interactive applications such as games and simulations. The talk covers the

underlying physical modeling, data representation strategies, and rendering

techniques, along with examples demonstrating integration into existing

graphics pipelines. This technique enables realistic, responsive deformations

that can be applied to a variety of materials and geometries, maintaining both

performance and visual fidelity across diverse hardware platforms.

Speaker Bio:

Nico Galoppo is currently a PhD

student in the GAMMA research group at the UNC Computer Science Department,

where his research is mainly related to physically based animation and

simulation of rigid, quasi-rigid and deformable objects, adaptive dynamics of

articulated bodies, hair rendering, and many other computer graphics related

topics. He also has experience with accelerated numerical algorithms on

graphics processors, such as matrix decomposition. His advisor is Prof. Ming C.

Lin and he is also in close collaboration with Dr. Miguel A. Otaduy (ETHZ). Nico has published several peer-reviewed

papers in various ACM conference proceedings and he has presented his work at

the SIGGRAPH and ACM Symposium on Computer Animation conferences. Nico grew up

in Belgium and holds an MSc in Electrical Engineering from the Katholieke Universiteit Leuven.

Materials: Course

Notes (0.98 MB)

https://doi.org/10.1145/1281500.1281669

Surface Detail Maps with Soft

Self-Shadowing

Abstract:

This presentation introduces an enhanced radiosity normal mapping

technique that integrates directional self-shadowing into bump-mapped surfaces

without increasing texture memory usage or reducing performance. By pre-baking

the lighting basis directly into bump map data, the method allows both static

radiosity and dynamic light sources to benefit from soft, directionally

accurate occlusion. The approach encodes directional ambient occlusion directly

into the RGB channels of the normal map, enabling realistic shading effects

such as bent normals and diffuse self-shadowing while

improving anti-aliasing, filtering, and numeric precision. The system is

compatible with existing art pipelines, works on older hardware, and can

generate maps from procedural, modeled, or captured height data. Used extensively

in titles such as Half-Life 2: Episode 2 and Team Fortress 2,

this technique provides a practical, artist-friendly solution for achieving

high-quality surface detail and lighting realism in real-time rendering.
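
The idea of baking the lighting basis into the bump map can be sketched as follows. This is a hedged illustration only: the three tangent-space basis directions, the squared-cosine weighting, the per-direction `occlusion` factors, and the function names `bake_texel` and `shade_texel` are assumptions made for this example and are not taken from the talk. The offline step converts a normal into three basis weights (optionally darkened per direction), and the runtime step reduces to a weighted sum of three directional lightmap colors.

```python
import math

# Three mutually orthogonal unit directions in tangent space (assumed basis;
# z points along the unperturbed surface normal).
BASIS = (
    (math.sqrt(2.0 / 3.0), 0.0, 1.0 / math.sqrt(3.0)),
    (-1.0 / math.sqrt(6.0), 1.0 / math.sqrt(2.0), 1.0 / math.sqrt(3.0)),
    (-1.0 / math.sqrt(6.0), -1.0 / math.sqrt(2.0), 1.0 / math.sqrt(3.0)),
)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def bake_texel(normal, occlusion=(1.0, 1.0, 1.0)):
    """Offline: turn a unit tangent-space normal into three basis weights.

    `occlusion` holds a hypothetical per-direction visibility factor in
    [0, 1]; 1.0 means unoccluded in that basis direction.
    """
    weights = []
    for basis_dir, occ in zip(BASIS, occlusion):
        w = max(0.0, dot(normal, basis_dir)) ** 2  # clamped, squared cosine
        weights.append(w * occ)
    return tuple(weights)  # would be stored in the texture's R, G, B


def shade_texel(baked, lightmaps):
    """Runtime: weighted sum of three directional lightmap RGB colors."""
    return tuple(
        sum(w * lm[c] for w, lm in zip(baked, lightmaps))
        for c in range(3)
    )
```

With this weighting, a flat normal `(0, 0, 1)` receives a weight of one third from each basis direction, so three identical lightmap colors reproduce that color unchanged.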

Speaker Bio:

Chris Green is a software engineer at Valve and has

been working on the Half-Life 2 series and Day of Defeat. Prior

to joining Valve, Chris Green worked on such projects as Flight Simulator II,

Ultima Underworld, the Amiga OS, and Magic: The Gathering Online.

He ran his own development studio, Leaping Lizard Software, for 9 years.

Materials: PDF

Slides (0.5 MB), Course

Notes (0.15 MB)

Simple, Fast Vector Texture Maps on GPU

Abstract: A simple and

efficient method is presented which allows improved rendering of glyphs

composed of curved and linear elements. A distance field is generated from a

high-resolution image and then stored into a channel of a lower-resolution

texture. In the simplest case, this texture can then be rendered simply by

using the alpha-testing and alpha-thresholding feature of modern GPUs, without

a custom shader. This allows the technique to be used on even the lowest-end 3D

graphics hardware.

With the use of programmable shading, the technique is extended to

perform various special effect renderings, including soft edges, outlining,

drop shadows, multi-colored images, and sharp corners.
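
The pipeline in this abstract (distance field from a high-resolution image, stored at lower resolution, then thresholded at render time) can be sketched on the CPU as below. This is a minimal sketch under assumptions: the brute-force neighborhood search, the `spread` clamp, the mapping of the edge to 0.5, and all function names are illustrative choices, and bilinear sampling stands in for the GPU's texture filtering.

```python
def make_distance_field(hi, factor, spread):
    """Build a low-res distance field from a high-res binary image.

    hi:     2D list of 0/1 pixels (1 = inside the glyph)
    factor: downsampling factor from high-res to low-res
    spread: maximum distance (in high-res pixels) that is encoded
    Returns a 2D list of values in [0, 1]; 0.5 corresponds to the edge.
    """
    h, w = len(hi), len(hi[0])
    lo = []
    for ly in range(h // factor):
        row = []
        for lx in range(w // factor):
            # High-res pixel at the center of this low-res texel.
            cy = ly * factor + factor // 2
            cx = lx * factor + factor // 2
            inside = hi[cy][cx] == 1
            best = float(spread)
            # Brute-force search for the nearest pixel of opposite state.
            for y in range(max(0, cy - spread), min(h, cy + spread + 1)):
                for x in range(max(0, cx - spread), min(w, cx + spread + 1)):
                    if (hi[y][x] == 1) != inside:
                        d = ((y - cy) ** 2 + (x - cx) ** 2) ** 0.5
                        best = min(best, d)
            signed = best if inside else -best
            row.append(0.5 + 0.5 * signed / spread)
        lo.append(row)
    return lo


def sample_bilinear(tex, u, v):
    """Bilinear lookup at continuous texel coordinates, clamped to the edge."""
    h, w = len(tex), len(tex[0])
    x = min(max(u, 0.0), w - 1.0)
    y = min(max(v, 0.0), h - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = tex[y0][x0] * (1.0 - fx) + tex[y0][x1] * fx
    bot = tex[y1][x0] * (1.0 - fx) + tex[y1][x1] * fx
    return top * (1.0 - fy) + bot * fy


def alpha_test(tex, u, v, threshold=0.5):
    """Hard edge: the equivalent of fixed-function alpha testing."""
    return sample_bilinear(tex, u, v) >= threshold


def soft_alpha(tex, u, v, width=0.1):
    """Soft edge: smoothstep across a band around the 0.5 contour."""
    d = sample_bilinear(tex, u, v)
    t = min(max((d - (0.5 - width)) / (2.0 * width), 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)
```

The hard-edged case needs only the comparison in `alpha_test`, while `soft_alpha` corresponds to the kind of effect that requires programmable shading.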

Speaker Bio:

Chris Green is a software engineer at Valve and has

been working on the Half-Life 2 series and Day of Defeat. Prior

to joining Valve, Chris Green worked on such projects as Flight Simulator II,

Ultima Underworld, the Amiga OS, and Magic: The Gathering Online.

He ran his own development studio, Leaping Lizard Software, for 9 years.

Materials: PowerPoint

Slides (5.3 MB), PDF

Slides (0.9 MB), Course

Notes (0.25 MB)

https://doi.org/10.1145/1281500.1281665

Real-Time

Tessellation on GPU

Abstract:

This presentation

explores the use of hardware tessellation to achieve high-detail, film-quality

character rendering in real time. It describes a pipeline in which coarse

artist-created control meshes are dynamically subdivided on the GPU, enabling

adaptive triangle density based on camera distance and screen-space error

metrics. The approach supports displacement mapping, detailed surface shading,

and seamless integration of complex normal and bump maps, while maintaining

consistent performance across varying levels of detail. Key topics include

tessellation algorithms, displacement mapping workflows, efficient GPU data

management, and rendering optimizations for dynamic characters. By leveraging

dedicated tessellation hardware, this technique bridges the gap between

offline-quality models and interactive performance, allowing for richly

detailed and responsive characters in modern game engines.

Speaker Bio:

Natalya Tatarchuk is a staff research engineer leading the

research team in AMD's 3D Application Research Group, where she pushes GPU

boundaries, investigating innovative graphics techniques and creating striking

interactive renderings. In the past she led the

creation of the state-of-the-art realistic rendering of city environments in

the ATI demo “ToyShop” and has been the lead for the

tools group at ATI Research. Natalya has been in the graphics industry for

years, having previously worked on haptic 3D modeling software and scientific

visualization libraries, among others. She has published multiple papers in

various computer graphics conferences and articles in technical book series

such as ShaderX and Game Programming Gems and has

presented talks at SIGGRAPH and at Game Developers Conferences worldwide,

amongst others. Natalya holds BAs in Computer Science and Mathematics from

Boston University.

Materials: PowerPoint Slides (20 MB),

PDF Slides (14.8 MB)

https://doi.org/10.1145/1281500.1361219

Tessellation

in Viva Piñata

Abstract:

This

presentation describes the tessellation techniques developed for Viva Piñata

on the Xbox 360 to manage the game’s high scene complexity and demanding GPU

workloads. Despite its stylized look, the title features unified shadowing,

volumetric rendering, and expensive shaders, making efficient level-of-detail

management essential. The talk focuses on edge-based tessellation of the game’s

central “diggable surface,” dynamically adjusting tessellation factors based on

screen-space edge length to preserve visual quality near the player while

rapidly reducing detail with distance. The method also incorporates

optimizations such as disabling tessellation for off-screen tiles, handling

attribute interpolation issues with auxiliary textures, and using vertex shader

filtering to avoid artifacts at terrain-water transitions. By combining visual

fidelity with performance-conscious design, the approach ensures consistent

frame rates while maintaining the game’s rich and interactive environments.
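
A tessellation factor driven by screen-space edge length can be sketched as follows. This is an illustrative sketch, not the game's code: the pinhole projection, the `focal_px`, `target_px`, and `max_factor` parameters, and the function names are all assumptions, and both endpoints are assumed to lie in front of the camera (z > 0 in camera space).

```python
def project(p, focal_px, width, height):
    """Project a camera-space point (z > 0) to pixel coordinates."""
    x, y, z = p
    return (width * 0.5 + focal_px * x / z,
            height * 0.5 - focal_px * y / z)


def edge_tess_factor(p0, p1, focal_px=800.0, width=1280, height=720,
                     target_px=16.0, max_factor=15.0):
    """Tessellation factor for one patch edge.

    The edge is split so that each segment covers roughly `target_px`
    pixels, clamped to [1, max_factor] (both values are hypothetical).
    """
    a = project(p0, focal_px, width, height)
    b = project(p1, focal_px, width, height)
    length_px = ((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2) ** 0.5
    return min(max(length_px / target_px, 1.0), max_factor)
```

Since the factor depends only on the two endpoints of an edge, two patches that share that edge compute the same value, which helps keep their boundaries consistent. The factor falls off with distance because the projected length shrinks as z grows.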

Speaker Bio:

Michael (“Mike”) Boulton is a graphics engineer who worked at

Rare/Microsoft Game Studios and later at 343 Industries. He presented

“Tessellation in Viva Piñata” at the SIGGRAPH 2007 “Advanced Real-Time

Rendering in 3D Graphics and Games” course while a Senior Software Engineer at

Rare/MGS.

His shipped credits include Viva Piñata (2006), Banjo-Kazooie: Nuts

& Bolts (2008), Halo: Reach (2010), Halo 4 (2012), and Halo 5 projects,

culminating in Graphics Engineering leadership roles at 343 Industries.

Materials: PowerPoint Slides (4 MB),

PDF Slides (0.8 MB), video (25 MB)

https://doi.org/10.1145/1281500.1361219

Illustrative Rendering in Team Fortress

2

Abstract: We present a set of artistic choices and

novel real-time shading techniques which support each other to enable the

unique rendering style of the game Team Fortress 2. Grounded in the

conventions of early 20th century commercial illustration, the look of Team

Fortress 2 is the result of tight collaboration between artists and

engineers. In this paper, we will discuss the way in which art direction and

technology choices combine to support artistic goals and gameplay constraints.

In addition to achieving a compelling style, the shading techniques are

designed to quickly convey geometric information using rim highlights as well

as variation in luminance and hue, so that game players are consistently able

to visually "read" the scene and identify other players in a variety

of lighting conditions.
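
To make the rim-highlight idea concrete, the sketch below computes a wrapped diffuse term and a view-dependent rim term for unit vectors. The specific forms are assumptions chosen for illustration (a scale-and-bias of the cosine term, a fourth-power falloff, and masking by an up vector so rims appear mostly on upward-facing silhouettes); the artist-authored parts of the actual shading model are not reproduced here, and all names and defaults are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def wrapped_diffuse(n, l, gamma=1.0):
    """Scale-and-bias the cosine term so lighting wraps past 90 degrees.

    n, l: unit surface normal and unit direction to the light.
    gamma: hypothetical shaping exponent.
    """
    return (0.5 * dot(n, l) + 0.5) ** gamma


def rim_term(n, v, up=(0.0, 1.0, 0.0), exponent=4.0):
    """Rim highlight: strongest where the normal is perpendicular to the view.

    n, v: unit surface normal and unit direction to the viewer.
    The up-vector mask and the exponent are illustrative assumptions.
    """
    fresnel = (1.0 - max(0.0, dot(n, v))) ** exponent
    return fresnel * max(0.0, dot(n, up))
```

A surface facing the viewer gets no rim contribution, while an upward-facing silhouette edge gets the full term, which is what lets the highlight outline a character's shape against the background.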

Speaker Bio:

Jason L. Mitchell is a software developer at Valve, where

he works on real-time graphics techniques for all of Valve's projects. Prior to

joining Valve in 2005, Jason worked at ATI for 8 years, where he led the 3D

Application Research Group. He received a BS in Computer Engineering from Case

Western Reserve University and an MS in Electrical Engineering from the

University of Cincinnati.

Materials: PowerPoint

Slides (10.2 MB), PDF Slides (2.8 MB),

Course

Notes (0.4 MB)

https://doi.org/10.1145/1274871.1274883

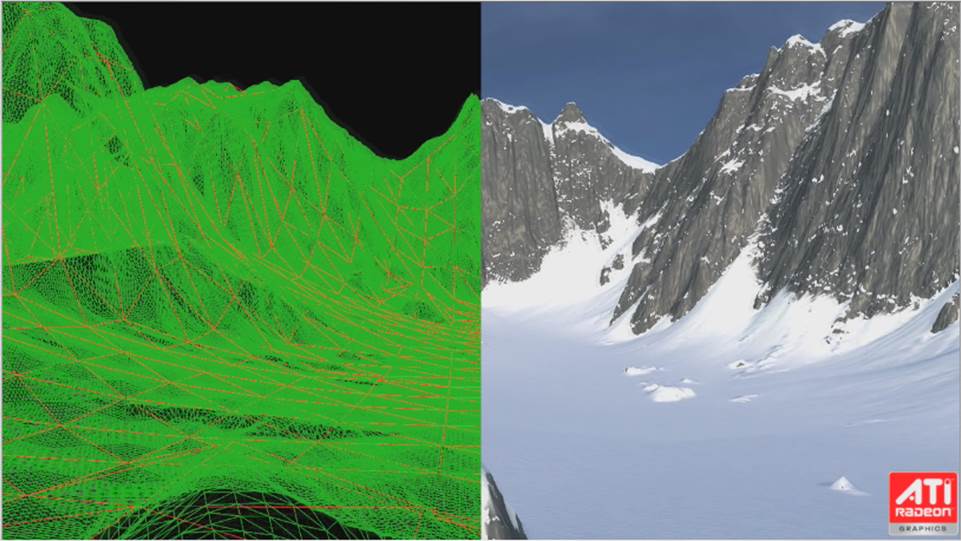

Frostbite Rendering Architecture and Case Study:

Terrain Rendering

Abstract:

This

presentation explores modern techniques for efficient, high-quality terrain

rendering, focusing on scalability from small-scale environments to expansive,

real-time landscapes. It examines the core challenges of rendering large

terrains - such as memory management, level-of-detail (LOD) transitions,

geometric complexity, and texturing - while maintaining interactive frame

rates. The talk presents a structured pipeline that integrates GPU-friendly

data structures, chunked LOD meshes, and view-dependent refinement strategies

to optimize both performance and visual fidelity. Special attention is given to

handling vast heightfield data sets, procedural detail generation, and

minimizing popping artifacts through geomorphing and

texture blending. By combining algorithmic optimizations with

hardware-conscious design, the approach enables robust terrain rendering

suitable for simulations, games, and virtual environments, delivering

consistent results across varied hardware platforms.
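
Geomorphing, mentioned above as a way to reduce popping, can be sketched as a distance-driven blend between a vertex's height at the finer level of detail and the height it would have at the coarser level. This is a generic sketch, not the engine's implementation: the linear morph range and the names `morph_factor` and `geomorph_height` are assumptions.

```python
def morph_factor(dist, morph_start, morph_end):
    """0 before morph_start, 1 after morph_end, linear in between."""
    t = (dist - morph_start) / (morph_end - morph_start)
    return min(max(t, 0.0), 1.0)


def geomorph_height(h_fine, h_coarse, dist, morph_start, morph_end):
    """Blend a vertex height from the fine LOD toward the coarse LOD.

    h_fine:   height sampled at the finer level of detail
    h_coarse: height the same position has in the coarser mesh
    dist:     distance from the camera to the vertex
    """
    t = morph_factor(dist, morph_start, morph_end)
    return h_fine + (h_coarse - h_fine) * t
```

By the time a chunk reaches the distance at which it switches to the coarser mesh, its vertices already sit at the coarse heights, so the swap itself causes no visible jump.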

Speaker Bio:

Johan Andersson is a self-taught senior software

engineer/architect in the central technology group at DICE. For the past 7

years he has been working on the rendering and core engine systems for games in

the RalliSport and Battlefield series. He now drives the

rendering side of the new Frostbite engine for the pilot game Battlefield:

Bad Company (Xbox 360, PS3). Recent contributions include a talk at GDC

about graph-based procedural shading.

Materials: PowerPoint Slides (18.2 MB),

PDF Slides (8.4 MB), Course Notes (1.6

MB)

https://doi.org/10.1145/1281500.128166

Animated Wrinkle Maps

Abstract: An efficient method for rendering animated

wrinkles on a human face is presented. This method allows an animator to

independently blend multiple wrinkle maps across multiple regions of a textured

mesh such as the female character shown above. This method is efficient in

terms of computation as well as storage costs and is easily implemented in a

real-time application using modern programmable graphics processors.
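
The per-region blending described in this abstract can be sketched for a single texel as follows. This is a simplified illustration, not the presented shader: the weighting scheme, the renormalization when influences exceed 1, and the names `blend_wrinkles`, `region_masks`, and `region_weights` are assumptions. Each region has a mask value at the texel, and the animator supplies one weight per wrinkle map per region.

```python
def normalize(v):
    length = sum(c * c for c in v) ** 0.5
    return tuple(c / length for c in v)


def blend_wrinkles(base_normal, wrinkle_normals, region_masks, region_weights):
    """Blend a base normal with several wrinkle-map normals at one texel.

    base_normal:          tangent-space normal from the base normal map
    wrinkle_normals:      one tangent-space normal per wrinkle map
    region_masks:         mask value in [0, 1] per face region at this texel
    region_weights[r][m]: animator weight for wrinkle map m in region r
    """
    influences = []
    for m in range(len(wrinkle_normals)):
        total = sum(mask * w[m] for mask, w in zip(region_masks, region_weights))
        influences.append(min(max(total, 0.0), 1.0))

    # Keep the combined wrinkle influence at or below 1.
    s = sum(influences)
    if s > 1.0:
        influences = [i / s for i in influences]
        s = 1.0

    blended = [c * (1.0 - s) for c in base_normal]
    for inf, wn in zip(influences, wrinkle_normals):
        for k in range(3):
            blended[k] += inf * wn[k]
    return normalize(blended)
```

Storage stays small in a scheme like this because the wrinkle maps are shared across the whole face, and only the low-frequency region masks and a handful of scalar weights vary per region and per frame.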

Speaker Bio:

Chris Oat is a staff engineer in AMD's 3D Application

Research Group where he is the technical lead for the group's demo team. In

this role, he focuses on the development of cutting-edge rendering techniques

for leading-edge graphics platforms. Christopher has published several articles

in the ShaderX and Game Programming Gems series and

has presented his work at graphics and game developer conferences around the

world.

Materials: PowerPoint

Slides (13 MB), PDF Slides (2.8 MB),

Course

Notes (0.15 MB)

https://doi.org/10.1145/1281500.128166

Finding

Next Gen - CryEngine2

Abstract:

This presentation

examines the architectural and rendering innovations behind CryEngine 2,

designed to deliver next-generation real-time graphics for large-scale

interactive worlds. It covers the engine's unified rendering pipeline, dynamic

lighting and shadowing systems, and advanced material framework that supports

complex shader combinations. The talk highlights techniques for high-fidelity

vegetation rendering, seamless indoor-outdoor transitions, and efficient

streaming of massive environments. Special emphasis is placed on deferred

lighting, parallax occlusion mapping, volumetric effects, and procedural

systems that enhance realism while maintaining performance. By integrating

scalability across hardware tiers with artist-friendly workflows, CryEngine

2 demonstrates how cutting-edge rendering features can be balanced with the

practical demands of production, enabling richly detailed and immersive virtual

environments.

Speaker Bio:

Martin Mittring is a software

engineer and member of the R&D staff at Crytek. Martin started his first

experiments early with text-based computers, which led to a passion for

computers and graphics in particular. He studied computer science and worked in

one other German games company before he joined Crytek. During the development

of Far Cry he was working on improving the Polybump™

tools and became lead network programmer for that game. His passion for

graphics brought him back to his former path, and so he became lead graphics

programmer in R&D. Currently he is busy working on the next iteration of

the engine to keep pushing future PC and next-gen console technology.

Materials: PowerPoint Slides

(13 MB), PDF

Slides (2.3 MB), Course

Notes (1.5 MB)
